3.3. One-Step Build

As your web application progresses, you move from working on a single live version to two or more copies in order to avoid breaking your production site while doing development work. At this point, a process arises for getting code into production. This process, referred to variously as building, releasing, deploying, or pushing (although these terms mean subtly different things), is something you'll perform over and over again. As time goes on, this process also typically becomes more complex and involved. What was once a manual copy process grows to involve changing files, restarting services, reloading configuration files, purging caches, and so on.

The time at which you release a new feature or piece of code is often the time at which you're under the most pressure: you've been working like crazy to get it done and tested and want to push it out to your adoring public as soon as you can. A complex and arcane build process, in which making mistakes or missing steps causes damage to your application, invites disaster.

A good rule of thumb for each stage of the build process is to have a single button that performs all of the needed actions. As time goes by and your process becomes more complicated, the action required to perform these steps remains a simple button push. We'll explore that evolution now.

3.3.1. Editing Live

When you first start development on a web application, it's probably installed on your desktop or laptop machine. You can edit the source files directly and see the results in your browser. It's a short hop from there to putting the application onto a shared production server and pointing the assembled masses at it. Hopefully, by this point your code is in source control, so each change you make is tracked and reversible.

The easiest way to make changes at this stage is to edit files directly.
This might mean opening up a shell on your web server and using a command-line editor, mounting your web server's disk over NFS or Samba and using a desktop editor, or modifying files on your personal machine and copying them to the production server. Editing files this way is all very well, and is often a sensible choice when you first start development and alpha release. You can very quickly see what you're doing, and your work is immediately available to your users.

Large and fairly obvious problems occur with this approach as time goes by. Any mistakes you make are immediately shown to your users. Features in development are immediately released, whether complete or not, as soon as you start linking them into the live pages of your site.

3.3.2. Creating a Work Environment

As soon as you have any kind of serious user base, these sorts of issues become unacceptable. Early on you'll find you need to split your environments into two parts or, more often, three or more. The environment model we'll discuss has three stages, which we'll address individually: development (or "dev"), staging, and production (or "prod").

3.3.2.1. Development

The development environment is your first port of call when working on anything new. All new code is written and tested in development. A good development environment mirrors the production environment but doesn't overlap with it in any way. This tends to mean having it on a physically separate server (or later, set of servers), with its own database and file store. Until your application grows to substantial size and complexity, a single server usually suffices for a full development setup. Although the server will contain all of your production elements, each development element can be much smaller than the final version (a database with a much smaller dataset, a file store with far fewer files) and so much faster to work with.

Only developers can see the development environment, so new features can be worked on without tipping off users.
Features that take a long time to integrate with existing features can be worked on gradually and prepared for release in a single push.

3.3.2.1.1. Personal development environments

For small teams, a single development environment will usually suffice, but as the team grows, so must the environment. With many developers working simultaneously, not stepping on each other's toes becomes an issue. At this stage, allowing individual developers to run their own development environments (on their own desktops or laptops) allows for much greater autonomy. When there are multiple development environments, it is often still useful to have a single shared development environment to which developers contribute their finished pieces of work, to make sure their work functions alongside that of their colleagues.

3.3.2.2. Staging

Having split out the production and development environments, you could be forgiven for thinking there's now enough of a separation between the two. But when a new feature has been developed and checked into source control, the need for testing the current release of the site quickly becomes apparent. The development environment does not fill this role well, for a couple of reasons.

First, it's likely that the development environment will not be in sync with source control (as features in development may not yet be committed), so testing does not give an accurate impression of how the current source control trunk will behave. Neither is it desirable to keep the development environment in sync, because code would then only be releasable when all developers finish their work at the same time and then pause development while the code is tested and prepared for release.

Second, the development hardware and data platform may behave differently from the production equivalent. The usual suspect in this regard is database performance. Queries that perform fine on a small development dataset may slow down dramatically on the full production dataset.
There are two ways of testing this: either replicate the production hardware and dataset or use the production hardware and dataset itself. The former is both expensive and complicated. As time goes by, your hardware platform will grow, and growing your testing environment at the same rate doubles your costs. Once you have a substantially large dataset, syncing it to a test platform could take on the order of days.

A staging environment takes a snapshot of code from the repository (a tag or branch specifically for release) and allows you to test it against production data on production hardware. This testing is still independent of actually releasing the code to users, and is the last stage before doing so.

3.3.2.2.1. Sub-staging

When you have specific features that can only be developed and tested against production data, it's sometimes necessary to create additional staging environments that allow the staging of code without putting it in the trunk for release. As your development team grows, the need for developers to test code against production data without committing it for release will increase, but at first there's a fairly simple compromise. A developer may just inform the rest of the team that they've committed code that isn't ready for release, stage and test it, and then roll back the commit to allow further stage and release cycles.

3.3.2.3. Production

The final stage of the process is the production environment. Production is the only environment that your users can see. Building your release tools in such a way as to force all releases to come from source control and go into staging provides a couple of important benefits.

First, you are forced to test each new version of the code in the staging environment. Even if a full testing and QA round is not performed, at least basic sanity checks can be (such as that the site runs at all, with no glaring error messages).
Second, every release is a tagged point in source control, so rolling back to a previous release is as trivial as getting it back onto staging and performing another deployment.

3.3.2.4. Beta production

At some stage you may wish to release new features to only a subset of users or have a "beta" version of the application in which new features are trialed with a portion of the user base before going to full production. This is not a job for a staging environment and should rather be seen as a second production environment. Your build tools would then have a separate build target, perhaps built from a separate source control branch (so that bug fixes on your main production environment can continue). In fact, two production environments typically require two development and two staging environments, so that work can continue on both code branches. This probably means a lot of hardware and confusion for developers, so we'll leave that one for the huge teams to work out among themselves.

3.3.3. The Release Process

We've described the basic release process, but it's worth revisiting it in a step-by-step context. This process is repeated over and over again during development, whenever new code is ready for release, so becoming familiar with the process and understanding how you can streamline it early on gives large benefits over time.

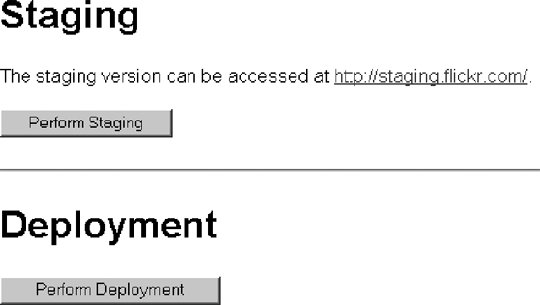

It is possible to automate the last two steps, and they are our focus for the rest of this section. Development, in terms of writing and committing new code, is not something we can (or would want to) automate.

3.3.4. Build Tools

Build tools are the set of scripts and programs that allow you to perform steps two and three of the release process without having to manually open up a shell and type a sequence of commands. The build tools for flickr.com are accessed via a web-based control panel shown in Figure 3-1.

We typically have two actions to perform (although there is a third we'll touch on in a moment): staging and deploying. The staging process performs a checkout of code from the head of a source-control branch (usually the trunk) into the staging environment. At this point, some mechanical changes may need to be applied to the checked-out files, such as modifying paths and config parameters to point to production assets. Staging can also be the point at which temporary folders need to be created and have their permissions set.

Figure 3-1. Control panel for build tools

In order to allow configuration files for development and production to both be kept in source control, there's a simple trick you can use. Create two configuration files. One file will include your entire base configuration, which doesn't vary between environments, and also contains your development environment settings (Example 3-3). The second configuration file will contain specific staging and production settings (Example 3-4).

Example 3-3. config.php

Example 3-4. config_production.php

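The following is not the original listing but a hypothetical sketch of the two-file arrangement, written in Python rather than PHP. It assumes each config file is a script of plain assignments; the setting names (`site_name`, `db_host`, `debug`) and the helper names are invented for illustration. A staging step appends the production file to the base file, and because the result is evaluated top to bottom, later assignments win.

```python
# Hypothetical stand-ins for config.php and config_production.php.
# Setting names and values are invented for illustration.
BASE_CONFIG = '''
site_name = "example"
db_host = "localhost"
debug = True
'''

PRODUCTION_CONFIG = '''
db_host = "db1.internal"
debug = False
'''


def stage_config(base: str, production: str) -> str:
    """Append the production overrides to the base config script."""
    return base.rstrip() + "\n" + production.lstrip()


def load_config(source: str) -> dict:
    """Evaluate a trusted config script and return the names it assigns."""
    settings: dict = {}
    # exec is acceptable here only because the config is our own code.
    exec(source, {"__builtins__": {}}, settings)
    return settings


if __name__ == "__main__":
    # Development sees the base file alone; staging sees the merged file.
    print(load_config(BASE_CONFIG))
    print(load_config(stage_config(BASE_CONFIG, PRODUCTION_CONFIG)))
```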
Your application then loads config.php as its config. This works fine in the development environment. During the staging process, the config_production.php file is concatenated onto the config.php file, giving the final configuration file shown in Example 3-5.

Example 3-5. merged config.php

Because the production settings follow the development settings, they override them. Only settings that need to be overridden should be added to the config_production.php file, so no configuration settings are ever duplicated, thus keeping configurations in sync.

In cases in which your configuration file can't contain two definitions of the same setting (such as when using an actual configuration file as opposed to a configuration "script" as in the above example), a simple script in the staging process can be used to remove settings from the first configuration file that are also present in the second, before merging the two files.

The second step is to deploy the staged and tested code. The deploy step of the process is, again, straightforward. Tag the staged code with a release number and date, and then copy the code to production servers. Other steps may be necessary depending on your application. Sometimes configuration settings may need to be changed further, and web server configs reloaded.

It's a good idea, as part of the deployment process, to write machine-specific configuration files to each of your web servers. When you have more than one web server in a load-balanced environment, it's useful for each server to be able to identify itself. It can greatly help debugging to output the server label in a comment in the footer of all served pages, since it allows you to find out quickly where your content is being served from. For flickr.com, in addition to including the server name in the general config file, we write a static file out during each deployment containing the server name and the deploy date and version (Example 3-6).

Example 3-6. flickr.com server deploy status file

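As a rough illustration of a deploy step writing such a file (not the flickr.com tooling, whose file format is not given here), the Python sketch below records the three facts named above: server name, version, and deploy date. The key/value layout, the function names, and the file path are all assumptions. The version label would be whatever release tag the deploy step just created.

```python
import socket
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional


def format_deploy_status(server: str, version: str, deployed_at: datetime) -> str:
    """Render the status text; this key/value layout is an assumption."""
    return (
        f"server: {server}\n"
        f"version: {version}\n"
        f"deployed: {deployed_at.isoformat()}\n"
    )


def write_deploy_status(path: Path, version: str,
                        server: Optional[str] = None,
                        deployed_at: Optional[datetime] = None) -> str:
    """Write the status file for one web server and return its contents."""
    # Fall back to this machine's hostname and the current UTC time.
    server = server or socket.gethostname()
    deployed_at = deployed_at or datetime.now(timezone.utc)
    text = format_deploy_status(server, version, deployed_at)
    path.write_text(text, encoding="utf-8")
    return text
```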
Another good idea, as part of both the staging and deployment processes, is to hold locks around both actions. This avoids two users performing actions at once, which might result in unexpected behavior. It is especially important to avoid performing a staging action during a deployment because fresh untested code might then be copied out to production servers.

3.3.5. Release Management

Traditional software development teams often have a "release manager" responsible for packaging code for release, overseeing development, bug fixing, and performing QA. When developing web applications, it can help to have one or two of your developers designated release managers. When a developer then has a piece of code ready for release, he can work with the release manager to stage, test, and deploy the code.

Or that's the theory. When you're working in a small trusted team, allowing more developers to have control of the release process will help you to be more agile. When a developer wants to release a piece of code, she can stage and test it herself, verify with other developers that they haven't checked in any code that hasn't been tested or isn't ready for production, and then deploy straight away. This workflow doesn't scale to more than four or five developers because they would spend all their time checking each other's status, but for small teams, it can work very well.

The key to allowing multiple developers to release code themselves and release it often is to reduce the amount of time the head of the main branch is in an "undeployable" state. There are a few ways to achieve this, each with its own advantages and disadvantages.

You can avoid checking in any code until it's fully working in the development environment and then immediately test it on staging. If it fails, roll back in source control and start the process over again. If it works, you can deploy it, or at least leave the tree deployable.
The huge downside to this is that you can't save your progress on a new feature unless it's completed. For complicated features that touch on many areas, this means that the developers are wildly out of sync with source control and will have to spend a long time merging when they're ready. It also means they can't checkpoint the code they're working on, in case they need to roll back to an earlier point in development of the same feature. It also makes it hard for more than one developer to work on a feature, since it needs to be all checked in at once and there's no rollback if people overwrite each other. All in all, not a great method.

You can stay deployable by using branches. Any new feature gets developed on a new branch. When it's ready, the branch gets merged into the trunk and the feature can be released. There are clear downsides to this approach, too. In addition to the general cognitive overhead of having to branch and merge constantly, it becomes very difficult to work on more than one feature at once. Each feature branch needs to be checked out separately and have its own development environment. When a feature branch is complete, merging it into the trunk can be difficult, especially for features that have been in development for a long time. Periodically merging trunk changes into the branch can help solve this problem, but it doesn't eliminate the need for merges completely, which can cause problems in their own right.

A slightly better (though still imperfect) solution is to keep everything in the trunk. When working on a new feature, wrap new feature code in conditional statements, based on a configuration option. Then add this option to both the base and production config files, enabling the feature in the development environment and disabling it in production. This allows continuous commits, avoids merges, and keeps the trunk deployable at all times.
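
The conditional wrapping described above can be sketched as follows. This is a minimal Python illustration under stated assumptions: the flag name `enable_new_search`, the two search functions, and the dictionary-based config are all hypothetical. The old code path stays in place and serves as the default if the flag is missing.

```python
# Hypothetical settings; the flag name enable_new_search is invented.
BASE_CONFIG = {"enable_new_search": True}            # on in development
PRODUCTION_OVERRIDES = {"enable_new_search": False}  # hidden from users


def effective_config(production: bool) -> dict:
    """Apply production overrides on top of the base settings."""
    config = dict(BASE_CONFIG)
    if production:
        config.update(PRODUCTION_OVERRIDES)
    return config


def old_search(query: str) -> str:
    return f"old results for {query}"


def new_search(query: str) -> str:
    return f"new results for {query}"


def search(query: str, config: dict) -> str:
    # Default to the old code path if the flag is missing.
    if config.get("enable_new_search", False):
        return new_search(query)
    return old_search(query)
```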
When the feature is ready to be deployed, you change the config option, stage, test, and deploy. The downside to this method is that you need to be careful when working on new features to ensure that they really are protected against appearing on the production platform. You also need to keep the old, existing code that the new feature replaces around, at least until after the new feature has been deployed. This can make the code size larger (and by extension, more complex) during new feature development and leaves a little more to go wrong. At each commit step, it's then important to check that you haven't either broken the old feature or unwittingly exposed any of the new version.

Both of these latter two approaches can work well in practice and allow you to keep your trunk in a deployable state. For larger development teams, though, both of these methods start to break down. It becomes harder to constantly merge branches as the total number of revisions increases, and more time is spent in a deploy-blocking state as developers commit and test their work.

A practical solution for large teams is to continue using the above methods, but not to deploy from the trunk. When a deployment is desired, the trunk is branched. Developers then work to bring the branch up to release quality, and no new feature work is committed to it. Once the work is complete, the branch can be tested, deployed, and finally merged back into the trunk. During the branch cleanup and testing phase, regular (deployment-blocking) work continues on the trunk as before, without affecting the deployment effort.

3.3.6. What Not to Automate

Not everything can or should be automated as far as production deployment goes, but there are still build tools that can make these processes easier. We'll discuss individually the two main tasks that can't be automated.

3.3.6.1. Database schema changes

Database schemas need to evolve as your application does, but differ from code deployments in two very important ways: they're slow and they can't always be undone. These two factors mean that automatic syncing of development and production database schemas is not a good idea.

The speed issue doesn't occur until a certain size of dataset is reached. It varies massively based on the software, hardware, and configuration, but a useful rule of thumb with MySQL is that a table with fewer than 100,000 rows can be changed on the fly, since the action will typically complete within a couple of seconds. Speed also varies depending on the number of indexes on the table and the other activity on the boxes, so any solid numbers should be taken with a grain of salt.

Past a certain point, a modification to a large table is going to lock that table for a considerable time. At that point, the database table in question will need to be taken out of production while modifications are made. This step might be as simple as closing the site, or as complex as building in configuration flags to disable usage of part of the database, while allowing the rest of the site to function.

The issue of reversibility applies regardless of the dataset size. A modification that drops a field loses data permanently. To create a fallback mechanism, table backups should ideally be taken before performing changes, but this is often impractical: large tables require a lot of time and space, and any data inserted or modified between the backup and the rollback is lost. To avoid losing data in the rollback, the application (or, again, the feature set in question) must be disabled prior to taking the backup.

With these issues in mind, we find we don't want to automate database changes, because each change must be carefully considered. What we can do, though, is make these changes as painless as possible: better living through scripts.
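
As one hypothetical example of such a script, the Python sketch below compares two schemas and proposes MySQL-style statements to bring production in line with development. It assumes each schema has already been read into a dictionary mapping table names to `{column: type}` dictionaries; how those are obtained (for instance from `SHOW CREATE TABLE` output) is left out. Changes that would lose data are emitted only as commented-out suggestions, so a person has to review them before anything runs.

```python
from typing import Dict, List

Schema = Dict[str, Dict[str, str]]  # table name -> {column name: column type}


def diff_schema(dev: Schema, prod: Schema) -> List[str]:
    """Return SQL that would bring prod in line with dev, for human review."""
    statements: List[str] = []
    for table, dev_cols in dev.items():
        if table not in prod:
            cols = ", ".join(f"{name} {ctype}" for name, ctype in dev_cols.items())
            statements.append(f"CREATE TABLE {table} ({cols});")
            continue
        prod_cols = prod[table]
        for name, ctype in dev_cols.items():
            if name not in prod_cols:
                statements.append(f"ALTER TABLE {table} ADD COLUMN {name} {ctype};")
            elif prod_cols[name] != ctype:
                statements.append(f"ALTER TABLE {table} MODIFY COLUMN {name} {ctype};")
        for name in prod_cols:
            if name not in dev_cols:
                # Dropping a column loses data, so only suggest it.
                statements.append(f"-- REVIEW: ALTER TABLE {table} DROP COLUMN {name};")
    for table in prod:
        if table not in dev:
            # Dropping a table loses data, so only suggest it.
            statements.append(f"-- REVIEW: DROP TABLE {table};")
    return statements
```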
A script that compares development schema to production schema and highlights any differences can be very useful when identifying changes that need to get propagated. It's only a small leap from there to having the script automatically generate the SQL needed to bring the two versions into line. Once you have the SQL to run, a one-click table backup means developers are far more likely to leave themselves with a fallback. After the SQL has been executed, another run of the first script can confirm that the two schemas are now in sync.

3.3.6.2. Software and hardware configuration changes

There are certain types of software and hardware configuration changes that are not suitable for automatic deployment. Hardware configuration changes, such as upgrading drivers, usually carry a certain amount of risk. This kind of task is best done first on a nonproduction server, and a script later created to perform it on production servers. Production servers can then be taken out of production one by one, have the script applied to them, and be re-enabled.

Software configuration changes, especially to basic services like the web server and email server software, should be handled in a process outside of the normal application deployment procedure. Configuration files often differ from web application files in that the currently running version might not be the version currently on disk. When Apache starts, it reads its configuration file from disk and doesn't touch it again until it's restarted or told to reload. A web server might be running fine, with errors in its configuration file that won't affect it until it's restarted. This can mean that although a version of the code and configuration files appears to be working fine, the configuration may not work when it is copied from a staging server to a production server and the production server is asked to reload its configuration.
This particular problem can be eliminated by forcing the configuration files to reload on the staging server before testing. While this step fixes the specific issue, there's a deeper problem with automatically deploying software configurations with the rest of the site code. Typically, the people writing application code are not the same people configuring software. Separating the web application deployment procedure from the software configuration deployment procedure means that the developers can focus on development and not have to worry about configuration issues, and the system administrator can work on system issues without having to worry about application code.

What this does tell us, though, is that in an ideal world we'd create two staging and development frameworks: one for application development and deployment and one for configuration deployment. This is a great idea, and one that adds the same benefits to system administration as we already have with application development: a single-click process for performing complex changes. The features that such a system might include are outside the scope of this discussion, but can be very similar to the application release framework.